A History of Audio Processing Part 7 – The Pioneers Explain How They Did It

by Jim Somich with Barry Mishkind

[December 2019] We continue with our look at the people and the products that took audio processing from its analog mode and brought it fully into the “Digital Age.”

As in our previous installments, the late Jim Somich took the lead on this guided tour. I get to help finish it off as a tribute to a great radio engineer. Last time, we spoke with Frank Foti. This time, we speak with Bob Orban.

The bridge between analog and digital signal processing was a mixture of new technology and people with vision to apply not only what was on hand but see where things were going. Bob Orban is perhaps one of the best known of these bridges.

Analog Meets Digital

Jim Somich: Let us look back at the dawn of DSP audio processing – and Bob Orban. Bob, I always thought of you as an “analog guru.” How did you make the transition into the digital age? It seems like you got real good, real fast!

Bob Orban: I did not always write DSP code, but I did create the algorithmic architecture and did most of the coefficient computations. In other words, I created “schematic diagrams with parts values” that other engineers at Orban turned into actual code.

I credit my ability to learn DSP in mid-career to an excellent engineering education at Princeton and Stanford that emphasized timeless engineering fundamentals, particularly math.

Eventually, I learned DSP myself by studying textbooks and journal articles, but I couldn’t have done it without the university education that I got.

Jim Somich: The Orban 8200 Optimod was the first DSP broadcast processor in the world to achieve commercial success – and that was quite an accomplishment. Bob, what were the influences that moved Orban from being an analog company into the digital era?

Bob Orban: The 8200 project originally started as a DSP model of the Orban 424 compressor using the then-new Motorola 56001 24-bit DSP chips.

It was the Motorola 24-bit architecture that finally allowed high-quality DSP filters suitable for pro audio applications. We got far enough along with that to realize we could build a complete DSP broadcast audio processor that modeled our analog processors and had a few “DSP-only” innovations besides.

At that time, Greg Ogonowski, a longtime friend and “friendly competitor” in the Gregg Labs days, was formally hired as a consultant on the 8200 project.

We decided to make the 8200’s multi-band algorithm five-band, as it was in the Gregg Labs processors, instead of six-band as it had been in the XT2. Overall, though, most of the influences for the 8200 came from earlier processing I had developed, including the 8100 and the XT2.

We learned a lot doing the 8200, and I combined this with new ideas that could finally be realized because we now had enough DSP power to pull them off. The 8400 was the end result. I should add that the 8400 project was the first Orban DSP-based processor that really exploited the things that one could do in DSP that were impossible in analog.

The fully DSP Orban 8400 introduced several new features

Opening Up New Possibilities

Jim Somich: That certainly sounds like a major jump forward. What sorts of things were now possible using DSP?

Bob Orban: In my opinion, the big advantage of DSP compared to analog processing is that one can implement “look-ahead processing” economically because making delay lines is just a matter of writing data to memory and reading it out later.

By being able to “look into the future,” the DSP-based processing actually can make intelligent decisions that are impossible in analog designs.

Look-ahead limiting is just one example of look-ahead processing.
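[Editor's sketch] The delay-line idea described above can be illustrated with a few lines of Python. This is only a minimal toy under stated assumptions, not Orban's algorithm: the function name `lookahead_limit`, its parameters, and the hard stepwise gain are all hypothetical, and a real limiter would smooth the gain changes. The audio is written into a short delay line while the gain computer examines the undelayed input, so the gain is already reduced when a peak emerges from the delay.

```python
from collections import deque


def lookahead_limit(samples, threshold=1.0, lookahead=4):
    """Toy look-ahead peak limiter (hypothetical sketch, not a product algorithm).

    The signal is delayed by `lookahead` samples. The gain for each delayed
    sample is chosen from the largest magnitude among that sample and the
    `lookahead` samples that follow it.
    """
    # Delay line: writing data to memory and reading it out later.
    delay = deque([0.0] * lookahead)
    # Magnitudes of the delayed sample plus everything "in the future".
    window = deque([0.0] * (lookahead + 1), maxlen=lookahead + 1)
    out = []
    # Pad with silence so the last real samples are flushed out of the delay.
    for x in list(samples) + [0.0] * lookahead:
        window.append(abs(x))
        delay.append(x)
        delayed = delay.popleft()
        peak = max(window)
        gain = 1.0 if peak <= threshold else threshold / peak
        out.append(delayed * gain)
    # Drop the initial latency so the output lines up with the input.
    return out[lookahead:]
```

Calling `lookahead_limit([0.1, 0.1, 0.1, 0.1, 2.0, 0.1, 0.1], threshold=1.0, lookahead=2)` reduces the gain two samples before the 2.0 peak reaches the output, which is something an analog limiter reacting only to the present cannot do. The cost is throughput delay: the output lags the input by `lookahead` samples.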

The 8400 and 8500 also use look-ahead processing for clipping distortion control and for our “half-cosine interpolation” composite limiting, among other functions.

Another important thing we did in the 8400 was to add a speech/music detector, which allowed the processing to be optimized separately for speech and music.

Some of the most sophisticated of the old-school, major-market processing chains actually had separate speech and music processing because these really require separate adjustments. DSP allowed us to do this automatically within one processor.

Complaints from the Field

Jim Somich: Some surprising feedback came from the field as people dealt with the effects of the look-ahead limiter. What happened?

Bob Orban: The most important decision we had to make before designing the 8400 was whether it was acceptable to make a processor with a throughput delay so long that it was impractical for talent to monitor its output through headphones when speaking. We assumed that the improvements in processing would be more important to broadcasters than the inconvenience of arranging a separate monitoring chain for talent headphones.

Unfortunately, we were surprised when the 8400 was released – we got lots of complaints about headphone monitoring. Accordingly, in Version 2.0 of the 8400 software, we cut the delay in half without compromising the look-ahead algorithms by looking at every delay in the chain and getting rid of the ones that were not actually necessary to implement the look-ahead processing. We also allowed users to configure the 8400 to emit a low-delay headphone monitor signal from an unused output.

When we designed the 8500, which maintained a 64 kHz minimum sample rate (as opposed to 32 kHz in the 8400), we further reduced delay by about another 4 ms by eliminating 64/32 and 32/64 kHz sample rate conversions in the signal path.

However, even with all this effort, the best-quality processing available in the 8500, using look-ahead in the most favorable way to reduce distortion, exhibits a 37 ms delay – which is too long for headphone monitoring.

Fortunately, most of the advantages of look-ahead processing are available with a 17 ms delay, which is the delay of most of the 8500 factory presets. Additionally, we made available a separate ultra-low-latency processing chain without look-ahead for those applications where the low delay was considered necessary, such as remote off-air cueing.

Jumping Ahead

Barry Mishkind: Hi Bob. Please allow me to jump in here, in 2019. When you and Jim spoke, some big changes seemed to be happening in audio processing. As we look back over those past ten years, what do you see as the most important advances in your processing products?

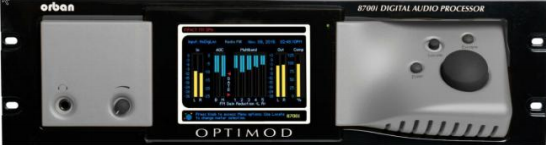

Bob Orban: From my perspective, the most important advance in Orban’s processing in the last 10 years is the 2010 development of the MX peak limiter, first introduced in Optimod-FM 8600 and improved just a few months ago with the release of MX+ technology for the 8700i.

The MX limiter is program-adaptive and uses a psychoacoustic model to assess when clipping distortion would be potentially audible, allowing the limiter to use various strategies to avoid it.

Barry Mishkind: Cleaner audio is always better, something to which the Optimod series has definitely given a lot of attention.

Bob Orban: Compared to older Orban peak limiter technologies, the MX limiter reduces audible distortion, increases transient punch, and increases high frequency headroom. We recently applied this technology to AM processing as well, in our XPN-AM, and the 1600PCn streaming and mastering software includes MX limiting for “flat” transmission channels.

For various reasons, including implementing the psychoacoustic model, the MX limiter has an intrinsic delay on the order of 200 ms. This is way too long for headphone monitoring, so we had to put more effort into implementing a separate headphone monitoring chain to feed the in-studio headphone monitoring system.

We were able to fit a complete Ultra-Low-Latency processing chain (roughly equivalent to an 8200) into the 8600 and 8700i’s DSP and run it in parallel with the on-air chain. This includes a distortion-cancelling FM clipper.

The total delay is about 4 ms (very headphone-friendly), although this is somewhat frequency-dependent. Of course, the advent of HD Radio made processing delay irrelevant anyway because of the diversity delay in the HD system’s analog FM path.

If a Legacy Sound is Wanted

By the way, in the 8600 and 8700i we retained the ability to run presets that use the older 8200-style and 8500-style processing, although of course they don’t perform as well as MX presets.

These can be used if a station is not running HD and needs low delay for off-air headphone cueing. Moreover, with the increased delays in other parts of the chain (such as digital STLs and exciters), the rest of the chain may be a limiting factor if a station wants to have talent monitor off-air.

The Multipath Mitigator

Barry Mishkind: You also mentioned another feature that sounds exciting in the way it reduces artifacts.

Bob Orban: Yes, the second most important recent advance in our FM processing is the “Multipath Mitigator” phase corrector, which eliminates interchannel phase shifts to minimize L-R energy, thereby minimizing multipath.
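[Editor's sketch] To see why interchannel phase shifts matter, consider the same tone in both channels with a phase offset in one of them. The following toy calculation is an illustration only and says nothing about how the Multipath Mitigator itself works; the function name `side_energy` and its parameters are hypothetical. It measures the mean-square level of the difference signal (L-R)/2 for a given phase offset.

```python
import math


def side_energy(phase_shift_rad, freq_hz=1000.0, sample_rate=48000, n=480):
    """Mean-square level of (L - R) / 2 for one tone present in both channels.

    Hypothetical illustration: the right channel is the left channel with a
    fixed phase offset. The defaults cover exactly ten cycles of the tone.
    """
    total = 0.0
    for i in range(n):
        t = i / sample_rate
        left = math.sin(2 * math.pi * freq_hz * t)
        right = math.sin(2 * math.pi * freq_hz * t + phase_shift_rad)
        side = (left - right) / 2
        total += side * side
    return total / n
```

With no phase offset the difference signal vanishes, while a 90-degree offset (`side_energy(math.pi / 2)`) puts a mean-square level of about 0.25 into L-R, following sin²(φ/2)/2. Removing such offsets therefore lowers the energy carried in the stereo subchannel.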

Barry Mishkind: Now that is indeed something that definitely will help a lot of stations in many different environments. Multipath has long been an irritation to engineers and listeners alike.

Anything else new over the last ten years we should mention?

Bob Orban: Some of the other features we added to our flagship FM processing include musically-correct subharmonic synthesis, improved bass limiting, a streaming monitor that can allow stations to hear the processor’s output over the Internet, and Xponential Loudness, which is a psychoacoustic process that improves the sound of “hypercompressed” source material and brings out detail in the audio.

Processor Platforms

Barry Mishkind: Something else has changed over the past decade: a shift in the different platforms upon which processors function, right?

Bob Orban: Ever since the 8200, Orban FM processors have been software-based but have run on dedicated hardware.

Over the past ten years, expanding our software technology so that it can run on third-party platforms (including virtual machines and the cloud) has been evolutionary.

By the way, I personally wrote all the DSP code in the 1600PC and the Optimod XPN-AM products, albeit in a high-level language, unlike the assembly language used for the Motorola/Freescale 56xxx DSPs.

Barry Mishkind: Evolutionary and, perhaps even, revolutionary. As I recall, your “Orban Inside” broadcast processors were the first to actually fit right inside transmitters. And, with the 1600PCn, we now see a number of processors that fit on laptops, along with virtual consoles.

Of course, the increasing capabilities of software platforms do raise a question about how the audio is handled. From the early days of single-band processing, we have seen two-band, three-band, four-band, five-band … all the way up to 36-band processing. Do you feel we need that many bands?

Bob Orban: No. The more independent bands you use, the less predictable the peak output level of the summed bands becomes, so this puts a greater and greater onus on the peak limiter to accommodate the wide variation in the amount of peak limiting that must occur.

While this can be ameliorated to some degree by various band-coupling schemes and by adapting compression thresholds to the amount of peak limiting required, this coupling reduces the independence of the bands and thus works at cross-purposes to having a high band count. Additionally, large numbers of bands tend to “homogenize” the sound, and the narrowband filters required can cause audible ringing.

Our experience is that when the band compressors are optimally designed, five bands is plenty to avoid audible spectral gain intermodulation (audible pumping of the loudness of one program element due to other elements, a wideband example being pumping of vocals by heavy bass). We feel that five bands provides an optimum tradeoff between source-to-source consistency, distortion control, and preservation of the musical character of the original program material.

More than anything, band-count is a “marketing number,” like “horsepower” and “Watts,” and is just one of a huge number of design details relevant to the sound of a processor.
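[Editor's sketch] The point about summed band peaks can be illustrated with a toy example. Assume, purely for illustration, that each band's output is a unit-amplitude sine wave standing in for an independently controlled band; the function name `summed_peak`, the frequencies, and the phases below are hypothetical choices, not taken from any product. Each band on its own never exceeds 1.0, yet the peak of the sum depends on how the bands happen to line up.

```python
import math


def summed_peak(freqs_hz, phases, sample_rate=48000, n=4800):
    """Peak magnitude of a sum of unit-amplitude sine waves (toy band outputs)."""
    peak = 0.0
    for i in range(n):
        t = i / sample_rate
        total = sum(
            math.sin(2 * math.pi * f * t + p) for f, p in zip(freqs_hz, phases)
        )
        peak = max(peak, abs(total))
    return peak


# Hypothetical band centers, one tone per band.
five_bands = [100.0, 300.0, 900.0, 2700.0, 8100.0]

# All tones crest together at t = 0, so the sum reaches the worst case.
aligned = summed_peak(five_bands, [math.pi / 2] * 5)

# Same tones and levels with different phases: the crests no longer coincide.
staggered = summed_peak(five_bands, [0.0] * 5)
```

Here `aligned` reaches the worst case of 5.0 (one unit per band), while `staggered` stays noticeably lower even though every band has the same level. The gap between the two cases widens as bands are added, which is the extra variation the final peak limiter has to absorb.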

Quality?

Barry Mishkind: Another aspect of the computer power that has been brought to bear on audio processing leads to this question I have to ask: Have MP3s killed the hearing of the generation – or can they still appreciate good audio?

Bob Orban: According to Sean Olive, despite concerns about MP3 pollution, research using double-blind listening tests shows that young people can still appreciate high quality audio. (See this blog entry)

The principal takeaway is: “When all 12 trials were tabulated across all listeners, the high school students preferred the lossless CD format over the MP3 version in 67% of the trials. The CD format was preferred in 145 of 216 trials (p<0.001).”
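[Editor's sketch] The quoted figures can be sanity-checked with a short calculation. This assumes a simple binomial model in which a listener with no preference picks either format with probability 0.5 on each trial; that may not be the statistical test used in the original study, and the function name `binomial_upper_tail` is hypothetical.

```python
from math import comb


def binomial_upper_tail(successes, trials, p=0.5):
    """Probability of at least `successes` hits in `trials` independent tries."""
    return sum(
        comb(trials, k) * p**k * (1 - p) ** (trials - k)
        for k in range(successes, trials + 1)
    )


preference_pct = 100 * 145 / 216          # share of trials preferring CD
tail = binomial_upper_tail(145, 216)      # chance of 145 or more by guessing
```

Under this assumed model, 145 of 216 works out to roughly 67%, and the chance of seeing a result at least that lopsided from pure guessing is far below 0.001, which is consistent with the quoted significance level.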

Coming Attractions

Barry Mishkind: At this point, now, Jim would want me to ask: Would you care to take out your crystal ball and give us a few predictions on what we can expect in the future?

Bob Orban: I cannot publicly comment on research we’re currently doing regarding processing algorithms. However, regarding the design of broadcast facilities, I expect that processors will become more and more virtualized and embedded in hardware that also performs other functions in the signal chain.

And, of course, Audio-Over-IP (AoIP) connectivity will continue to be more and more important throughout the audio production ecosystem, including broadcast.

Standards

Barry Mishkind: Speaking of the huge move to AoIP in broadcast plants, what can you say about standards we are seeing, and might see?

Bob Orban: The Audio Engineering Society (AES) is doing a lot of important work to make standardized recommendations for the target loudness of digital media, particularly streaming, and I am an active participant in this effort.

This has the potential to improve the streaming experience by allowing enough headroom to avoid excessive peak limiting and reducing the need for listeners to adjust their volume controls.

All of Orban’s processors for “flat” transmission channels include loudness controllers that use both Jones & Torick (CBS) and ITU-R BS.1770 loudness control and metering technologies, and our processors make it easy for the user to specify a target loudness and comply with it.

Additionally, the AES is on the forefront of educational efforts to try to reduce the amount of “hypercompression” applied in audio mastering.

Most of the big streaming services are now loudness-normalizing their files, so excessive peak limiting no longer gives material a loudness advantage in these services. This reduces the motivation to hypercompress masters. This is good news for radio broadcasters who are tired of dealing with source material that comes “pre-distorted” from the labels.

Barry Mishkind: Bob, I want to thank you sincerely for taking the time to revisit this topic of high interest among broadcasters, as well as for all the important innovations you have brought to our industry.

– – –

Jim Somich passed away suddenly early in 2007. He always was actively interested in audio processing, always pushing the envelope at his company.

Jim was also very generous with his time, making room to help a number of broadcast engineers to get started. From my point of view, it was his input that made this series possible. – Barry Mishkind

– – –
